Cross-Cultural Tone Sentinel: Mastering Multilingual Sentiment & Escalation Precision

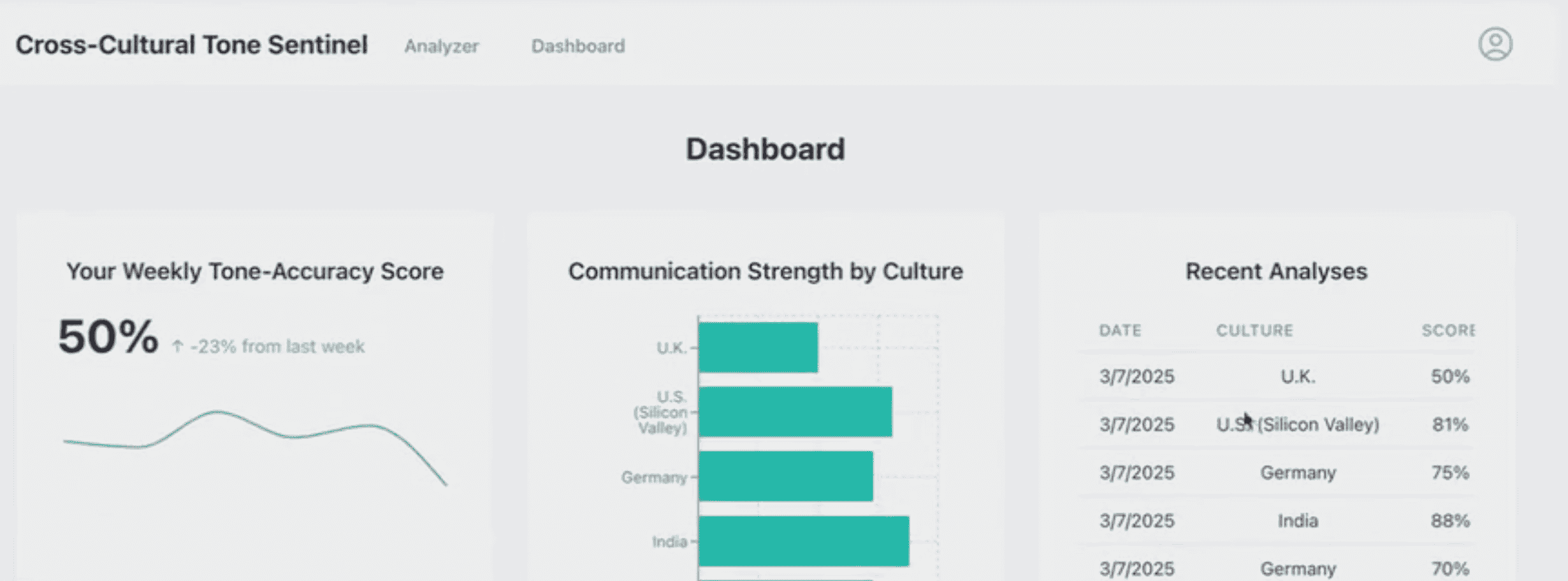

Achieved 95% escalation detection precision across 25+ languages with culturally aware sentiment mapping — reducing churn by 18%.

THWORKS built the Cross-Cultural Tone Sentinel — an AI sentiment engine that detects intent, politeness levels, and escalation risk across 25+ languages while accounting for regional cultural nuances. Unlike standard sentiment tools that misclassify 'polite frustration' in Asian markets as neutral or 'direct feedback' in Northern Europe as aggressive, this system uses a Cultural Tone Matrix to achieve 95% escalation precision and reduce customer churn by 18% in non-English markets.

The Challenge: 22% Churn in Non-English Markets Due to Cultural Misreads

A global retail enterprise suffered a 22% churn rate in non-English-speaking markets because its legacy sentiment tool consistently misread cultural context. Polite frustration expressed through Japanese honorifics was categorized as 'neutral.' Direct Scandinavian feedback was flagged as 'aggressive.' These misclassifications triggered the wrong responses, either ignoring genuinely upset customers or escalating satisfied ones, and led to public PR incidents on social media in APAC markets.

In a global marketplace serving 40+ countries, one-size-fits-all sentiment analysis is a business liability. The client needed a system that could decode cultural subtext in real time — distinguishing between a German customer's direct complaint (actionable but not angry) and a Japanese customer's restrained dissatisfaction (polite but churning) — without overwhelming support teams with false positives.

Our Solution: Cultural Context Layer with Politeness Weighting

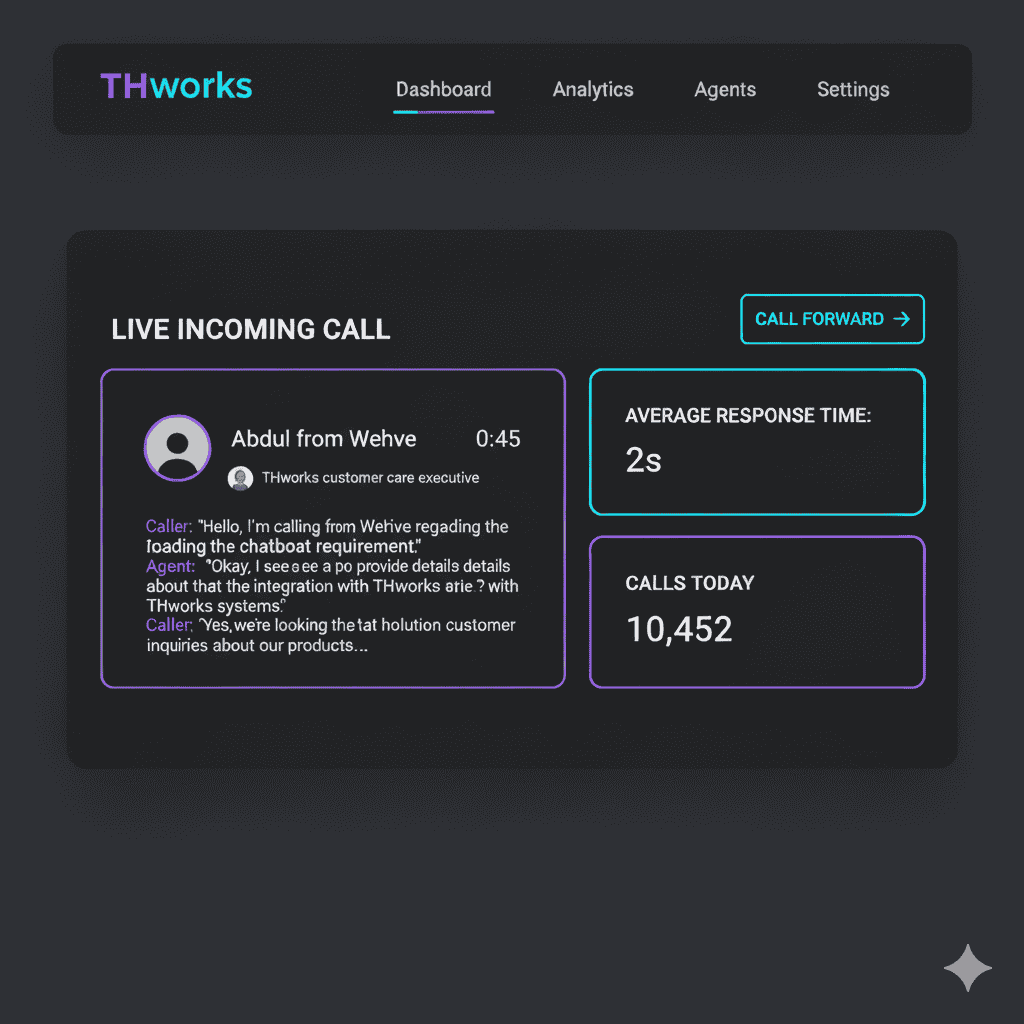

We built a multi-stage NLP pipeline with a 'Cultural Context Layer.' Raw text is first normalized and language-detected, then passed through a fine-tuned Transformer model that maps linguistic tokens to a regional Tone Matrix — replacing the traditional binary positive/negative sentiment score with a multi-dimensional cultural intent profile.
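The stages described above can be sketched roughly as follows. This is a minimal illustration, not the production pipeline: the `ToneProfile` fields, the function names, and the toy language check are all assumptions made for this example, and `score_tone` is a stand-in for the fine-tuned Transformer that simply returns fixed values.

```python
from dataclasses import dataclass
import unicodedata


@dataclass
class ToneProfile:
    """Hypothetical multi-dimensional result, replacing a single +/- score."""
    language: str
    politeness: float   # 0.0 (blunt) to 1.0 (highly formal)
    frustration: float  # 0.0 (calm) to 1.0 (strongly frustrated)
    directness: float   # 0.0 (indirect) to 1.0 (very direct)


def normalize(text: str) -> str:
    # NFKC folds full-width and compatibility characters; whitespace is collapsed.
    return " ".join(unicodedata.normalize("NFKC", text).split())


def detect_language(text: str) -> str:
    # Toy heuristic for illustration; a real system would use a trained identifier.
    if any("\u3040" <= ch <= "\u30ff" for ch in text):  # hiragana or katakana
        return "ja"
    return "und"  # language tag for "undetermined"


def score_tone(text: str, language: str) -> ToneProfile:
    # Stand-in for the fine-tuned model; returns fixed illustrative values.
    return ToneProfile(language=language, politeness=0.5, frustration=0.0, directness=0.5)


def analyze(raw_text: str) -> ToneProfile:
    # Stage 1: normalize, stage 2: detect language, stage 3: map to a tone profile.
    text = normalize(raw_text)
    language = detect_language(text)
    return score_tone(text, language)
```

The point of the sketch is the shape of the output: downstream logic receives several named dimensions per message rather than one polarity value.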

Our approach moved beyond keyword matching to semantic intent mapping with cultural calibration. We implemented 'Politeness Weighting' — a feature that adjusts sentiment scores based on regional communication norms. Japanese honorifics increase the politeness baseline, making frustration signals more significant when detected. Germanic directness is normalized, preventing false aggression flags.
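One way Politeness Weighting could work is shown below. The baseline values, the region keys, and both formulas are assumptions chosen for illustration; the actual Tone Matrix values and weighting function are not given in this case study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegionNorms:
    politeness_baseline: float  # typical formality of everyday messages, 0.0 to 1.0
    directness_baseline: float  # typical bluntness of everyday messages, 0.0 to 1.0


# Illustrative values only; not taken from the deployed Tone Matrix.
NORMS = {
    "ja-JP": RegionNorms(politeness_baseline=0.8, directness_baseline=0.2),
    "de-DE": RegionNorms(politeness_baseline=0.4, directness_baseline=0.8),
}


def weighted_frustration(raw_frustration: float, region: str) -> float:
    """Amplify frustration more where the norm is polite restraint."""
    norms = NORMS[region]
    adjusted = raw_frustration * (1.0 + norms.politeness_baseline)
    return min(1.0, adjusted)  # keep the score in the 0.0 to 1.0 range


def weighted_aggression(raw_directness: float, region: str) -> float:
    """Count only directness above the regional norm as aggression."""
    norms = NORMS[region]
    return max(0.0, raw_directness - norms.directness_baseline)
```

Under these assumed values, the same raw frustration signal scores higher for a Japanese message than for a German one, and a German message at its usual level of directness registers no aggression at all.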

Key Technical Decisions

Native Multilingual LLMs: Used Gemini-class models with superior reasoning in low-resource languages, avoiding the accuracy loss of traditional translate-then-analyze workflows that strip cultural context during translation.

Regional Escalation Thresholds: Developed a configurable logic gate that applies a different escalation threshold per region: a 'latent frustration' score of 0.6 triggers handoff in Japanese markets, while direct-communication cultures require a score of 0.8.
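A minimal sketch of such a gate, using the two thresholds quoted above. The region codes, the choice of `de-DE` as the example direct-communication market, and the fallback for unlisted regions are assumptions for illustration.

```python
# Assumption: regions without an explicit entry fall back to the stricter value.
DEFAULT_THRESHOLD = 0.8

ESCALATION_THRESHOLDS = {
    "ja-JP": 0.6,  # from the text: Japanese markets hand off at 0.6
    "de-DE": 0.8,  # from the text: direct-communication cultures require 0.8
}


def should_escalate(latent_frustration: float, region: str) -> bool:
    """Return True when the score reaches the region's handoff threshold."""
    threshold = ESCALATION_THRESHOLDS.get(region, DEFAULT_THRESHOLD)
    return latent_frustration >= threshold
```

With this configuration, a score of 0.65 hands off a conversation in the Japanese market but not in the German one. Keeping the thresholds in a plain mapping means they can be tuned per region without touching the gate itself.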

Human-in-the-Loop Retraining: Integrated a dashboard where agents correct tone misclassifications, feeding corrections back into the model daily — improving accuracy by 3-5% per month during the first quarter.
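The daily feedback step might look something like the following. The `ToneCorrection` record and the `daily_batch` helper are hypothetical names for this sketch; how the dashboard actually stores corrections and how retraining is triggered are not described in the source.

```python
from dataclasses import dataclass
from datetime import date, datetime


@dataclass(frozen=True)
class ToneCorrection:
    """Hypothetical record of one agent correction made in the dashboard."""
    message_id: str
    region: str
    predicted_label: str
    corrected_label: str
    corrected_at: datetime


def daily_batch(corrections: list[ToneCorrection], day: date) -> list[ToneCorrection]:
    """Select the corrections from one day that actually changed the label."""
    return [
        c for c in corrections
        if c.corrected_at.date() == day and c.corrected_label != c.predicted_label
    ]
```

Filtering out records where the agent confirmed the prediction keeps the retraining batch focused on genuine misclassifications.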

Results: From 40% Missed Escalations to 95% Detection Precision

Before

Standard sentiment analysis missing 40% of subtle escalations in APAC markets. Cultural misreads causing PR incidents. 22% churn in non-English regions. Support teams flooded with false-positive escalations.

After

95% escalation detection precision across all regions. Automated, culturally aware responses matching local communication expectations. 18% churn reduction. CSAT scores up 34% in previously underperforming markets.
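For readers comparing the two headline figures: the "before" number describes missed escalations and the "after" number describes precision, which are computed from different counts. Assuming the standard definitions, with TP as correctly flagged escalations, FP as false alarms, and FN as escalations that were missed:

```latex
\text{precision} = \frac{TP}{TP + FP},
\qquad
\text{miss rate} = \frac{FN}{TP + FN} = 1 - \text{recall}
```

Under these definitions, missing 40% of escalations corresponds to 60% recall, while 95% precision means that 95 of every 100 flagged conversations were genuine escalations.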


"The Tone Sentinel changed how we view global support. For the first time, our automated systems actually understand our customers in Tokyo and Berlin as well as they do in New York. The escalation accuracy is uncanny — we caught a brewing PR issue in our Korean market 3 hours before it hit social media."

Frequently Asked Questions

Common questions about this project and our approach.

The model is trained on culturally annotated datasets that include localized sarcasm patterns, idioms, and communication norms. It analyzes the full session context rather than individual messages, so a Japanese 'thank you very much' after a complaint thread is correctly flagged as a frustrated sign-off, not genuine gratitude.
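The session-level idea can be illustrated with a small sketch. Everything here is an assumption for the example: the `Message` fields, the label names, and the 0.5 complaint level. In the real system the per-message scores would come from the model rather than being supplied by hand.

```python
from dataclasses import dataclass


@dataclass
class Message:
    text: str
    frustration: float  # per-message score, 0.0 to 1.0 (assumed to come from the model)
    is_thanks: bool     # whether the message reads as an expression of thanks


def classify_sign_off(session: list[Message], complaint_level: float = 0.5) -> str:
    """Label the last message using the whole thread, not the message alone."""
    if not session:
        return "empty"
    *earlier, last = session
    # Peak frustration seen anywhere earlier in the conversation.
    prior_peak = max((m.frustration for m in earlier), default=0.0)
    if last.is_thanks and prior_peak >= complaint_level:
        return "frustrated_sign_off"
    if last.is_thanks:
        return "genuine_gratitude"
    return "other"
```

The same closing "thank you very much" receives a different label depending on what preceded it, which is the behavior the answer above describes.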


Optimize Your Global Customer Intelligence

Let's discuss how we can solve your technical challenges with the same precision and impact.
